Image recognition has quietly moved from research labs into everyday systems. It tags photos, guides self-driving cars, scans medical images, and monitors infrastructure at scale. On paper, accuracy numbers often look impressive. In practice, the picture is more nuanced.

Accuracy in image recognition is not a single number, and it does not mean the same thing in every context. A model that performs well on clean benchmark images may struggle in real-world conditions, unusual angles, poor lighting, or complex scenes. To understand how accurate this technology really is, it helps to look beyond headlines and into how accuracy is measured, where it holds up, and where the gaps still are.

This article breaks that down in plain terms, without hype, and with a focus on how image recognition behaves outside controlled demos.

Accuracy in Image Recognition

Accuracy in image recognition does not mean that a system always sees what a human sees. It means that, under defined conditions, a model produces predictions that align with labeled data according to specific rules.

Most systems are evaluated using structured datasets where images are annotated in advance. A model is considered accurate when its predictions match those annotations within accepted thresholds. This already introduces a limitation: models are measured against human labels, not against reality itself.

Accuracy also varies by task. Image classification focuses on identifying what is present. Object detection adds the requirement of locating it. Segmentation goes further by defining precise boundaries. Each step increases complexity and introduces new opportunities for error.

Core Metrics Used in Image Recognition

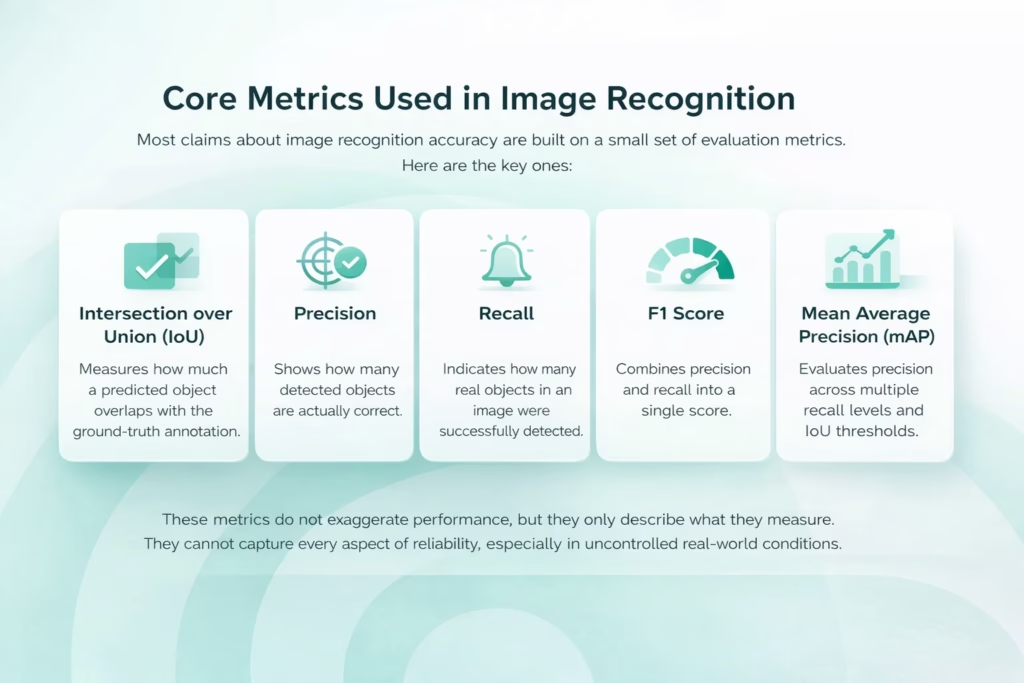

Most claims about image recognition accuracy are built on a small set of evaluation metrics. Each of them captures a different aspect of performance, and none of them tells the full story on its own.

- Intersection over Union (IoU). Measures how closely a predicted object overlaps with the ground-truth annotation. It focuses on spatial alignment, not just whether an object was detected.

- Precision. Shows how many detected objects are actually correct. High precision means fewer false positives.

- Recall. Indicates how many real objects in an image were successfully detected. High recall means fewer missed objects.

- F1 score. Combines precision and recall into a single value. Useful for comparison, but it can hide important trade-offs between false positives and false negatives.

- Mean Average Precision (mAP). Commonly used for object detection. Evaluates precision across multiple recall levels and IoU thresholds. Powerful, but often misunderstood or quoted without context.

These metrics do not exaggerate performance, but they only describe what they are designed to measure. They cannot capture every aspect of reliability, especially when systems move from controlled datasets into real-world conditions.

Image Recognition Accuracy at FlyPix AI

At FlyPix AI, we work with image recognition in real-world geospatial data, where accuracy is tested by scale, complexity, and changing conditions. Satellite, aerial, and drone imagery rarely look clean, so accuracy has to hold up beyond benchmarks.

We focus on making image recognition useful in practice. That means AI agents that detect and outline objects quickly, but also models trained on industry-specific data rather than generic examples. Custom training allows accuracy to reflect how teams actually work, whether in construction, agriculture, or infrastructure monitoring.

For us, accuracy is not a single number. It is consistency over large datasets, reliability over time, and performance that remains stable as projects move from pilots to production. That is the standard we build FlyPix AI around.

Why Benchmark Accuracy Can Be Misleading

High benchmark scores are real, but they can give the wrong impression. Many image recognition systems report excellent results on popular datasets, and it is easy to read that as “problem solved.” The catch is that benchmarks often reward performance in conditions that are cleaner and more predictable than what systems face after deployment.

Benchmarks Often Test the Easy Part

The issue is not that benchmark results are incorrect. It is that many benchmarks are easier than real-world conditions. Images in curated datasets often have clear subjects, familiar viewpoints, and relatively tidy compositions. Lighting is stable, objects are centered, and the weird cases that break models in production show up less often.

When models learn on and are evaluated against that type of data, they get very good at what they see most. Then they meet the real world: different camera angles, messier backgrounds, seasonal changes, motion blur, occlusion, and objects that do not look like the textbook version. Performance can drop sharply, and that drop is rarely visible in headline accuracy numbers.

Image Difficulty Is Uneven, But Metrics Treat It Like It Is Equal

A useful way to think about it is this: not every image is equally recognizable, even for humans. Some images are understood instantly. Others require a second look, more context, or simply more time.

Traditional evaluation treats all images as if they carry the same difficulty weight, which skews what “accuracy” means. Many benchmark datasets are dominated by images that are easy for people to recognize quickly. That matters because models can appear to improve a lot while mainly getting better at the easy end of the spectrum, not the truly challenging cases.

Larger models often show this pattern clearly: strong gains on simpler images and weaker progress on harder ones. So the average score rises, but the gap on difficult, real-world visuals remains stubborn.

Humans and Models Fail Differently

Humans and machines do not approach recognition the same way. People rely on context, memory, and flexible reasoning. Models rely on learned statistical patterns. That difference shows up the moment an image becomes ambiguous, cluttered, or unfamiliar.

Humans can often recover from partial information and still make a good call. Models tend to be more brittle, and when the pattern breaks, the failure can be abrupt. Some newer systems that combine vision and language behave a bit more human-like on unusual inputs, but human-level robustness is still not the norm.

This is also why broad claims that “AI beats humans at vision” usually come from narrow benchmark comparisons. In messy, uncontrolled environments, the story is more complicated, and that is exactly where accuracy matters most.

Accuracy in Real-World Applications

Industrial and Infrastructure Use

In controlled environments, image recognition can be highly accurate. Fixed cameras, stable lighting, and limited object types allow systems to perform consistently. This is common in manufacturing inspection and infrastructure monitoring.

Autonomous Vehicles and Safety-Critical Systems

In dynamic environments like roads, accuracy becomes harder to maintain. Lighting, weather, and unpredictable objects challenge even advanced systems. Here, reliability under stress matters more than average accuracy.

Medical Imaging

Medical image recognition operates under strict requirements. Images are subtle and stakes are high. Even small errors matter. Accuracy improvements are valuable, but systems require careful validation and human oversight.

Surveillance and Security

Surveillance systems face additional challenges related to bias, fairness, and environmental variation. Accuracy may differ across demographics or locations, raising concerns that go beyond technical performance.

Adversarial Weaknesses and Reliability Limits

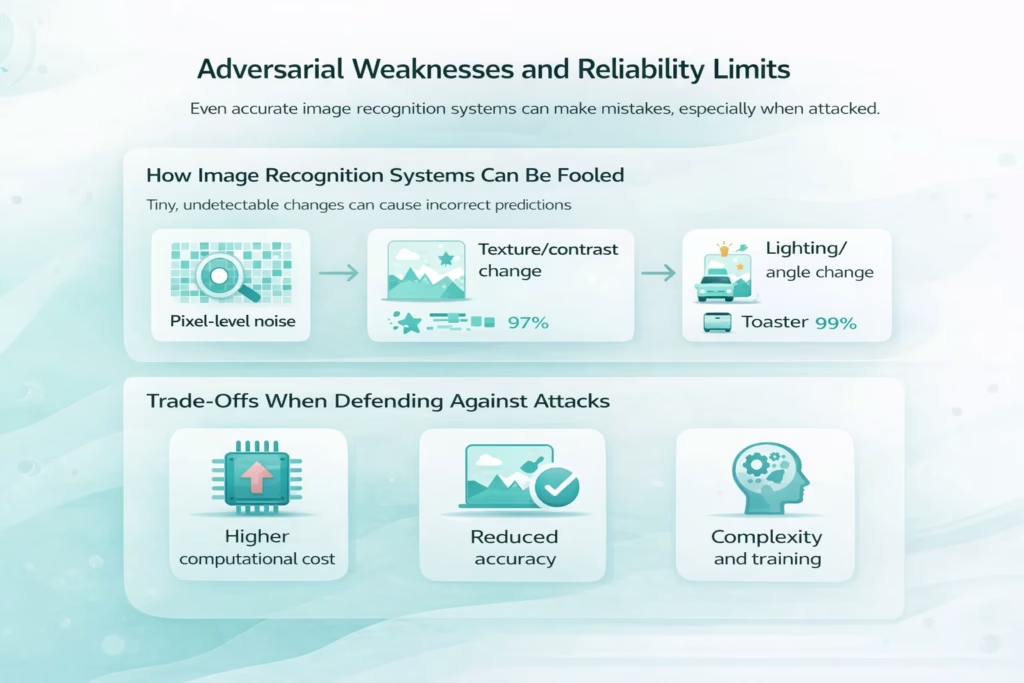

Even highly accurate image recognition systems can fail in unexpected ways. These failures are not always obvious, and they often occur in situations that look trivial to a human observer.

How Image Recognition Systems Can Be Fooled

Small, carefully crafted changes to an image can cause a model to make confident but incorrect predictions.

- Minor pixel-level noise that is invisible to the human eye

- Subtle texture or contrast changes that alter learned patterns

- Slight shifts in lighting, angle, or background composition

- Artificial perturbations designed specifically to confuse models

To a person, the image still looks the same. To the model, it may suddenly belong to a completely different category.

Trade-Offs in Defending Against Attacks

Techniques exist to make models more robust, but they rarely come for free.

- Increased computational cost and slower inference

- Reduced accuracy on clean, non-adversarial images

- More complex training and maintenance pipelines

- Higher deployment and operational costs

Because of these trade-offs, many real-world systems accept a level of fragility rather than aiming for full adversarial resistance.

Why Accuracy Alone Is Not Enough

A system can be accurate on average and still fail at the moments that matter most. Many image recognition models perform well on familiar data but break down when they encounter edge cases, unusual conditions, or scenarios that were poorly represented during training. These failures are not always dramatic. Often, the system continues to operate as if nothing is wrong, producing outputs that look confident but are quietly incorrect.

Because of this, consistency and transparency often matter more than headline accuracy numbers. Teams need to understand how a system behaves when it is uncertain, where its blind spots are, and how errors surface. Responsible deployment depends on knowing not just how often a model is right, but how and why it is wrong when things go off script.

So, How Accurate Is Image Recognition Technology?

In controlled conditions, image recognition technology can be extremely accurate. When tasks are narrow, environments are stable, and data closely matches training sets, performance can rival or even exceed human results. This is why the technology works so well in structured settings like manufacturing inspection or fixed infrastructure monitoring.

In complex, real-world environments, accuracy drops noticeably. Models struggle with rare events, unfamiliar contexts, and shifts in data distribution over time. Progress in image recognition is real, but it is uneven. Accuracy metrics capture part of the story, not the whole picture, and they need to be interpreted alongside context, risk, and real-world behavior.

Conclusion

Image recognition accuracy is not a promise. It is a conditional outcome shaped by data, evaluation methods, and context.

When used carefully, with realistic expectations and proper safeguards, image recognition delivers real value. When treated as infallible, it introduces risk.

The most important question is not how accurate image recognition is in theory, but how it behaves in the specific conditions where it is deployed. That is where accuracy becomes meaningful.

Frequently Asked Questions

Image recognition can be very accurate in controlled environments and well-defined tasks. In real-world conditions, accuracy varies depending on data quality, context, and how closely deployment conditions match training data.

Accuracy reflects how closely a model’s predictions match labeled data under specific evaluation rules. It does not measure understanding, reasoning, or reliability under unexpected conditions.

Many benchmarks contain clean, predictable images that are easier to recognize than real-world data. As a result, models may achieve high scores without being robust to variation, noise, or rare scenarios.

In narrow, repetitive tasks with clear visuals, image recognition systems can outperform humans. In complex, ambiguous, or unfamiliar situations, humans generally remain more reliable.

Common metrics include Intersection over Union (IoU), precision, recall, F1 score, and mean average precision (mAP). Each metric captures a different aspect of performance and should be interpreted together, not in isolation.