Image recognition models rarely fail because the architecture is wrong. They fail because accuracy is misunderstood, measured poorly, or checked in conditions that don’t reflect reality. A model can look impressive during training and still fall apart the moment it meets real data.

Checking image recognition accuracy is not about chasing a single score. It’s about understanding what the model gets right, what it misses, and why those mistakes happen. In practice, accuracy is a mix of metrics, validation discipline, and honest testing against real scenarios. This guide walks through how to evaluate image recognition systems in a way that actually tells you whether they are ready to be used.

Why Overall Accuracy Rarely Tells the Truth

Overall accuracy is the most common metric and also the least informative once projects move beyond toy problems. It measures how often predictions match labels, but it ignores class imbalance, error severity, and distribution shifts.

A model can score very high accuracy by performing well on common, easy cases while consistently failing on rare but critical ones. In real projects, those rare cases are often the reason the model exists in the first place.

Overall accuracy is not useless, but it should be treated as a surface signal. It can indicate whether something is obviously broken, but it cannot confirm that a system is reliable.
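A small sketch makes the imbalance problem concrete. The dataset, class names, and the always-predict-the-majority "model" below are hypothetical, chosen only to show how a high overall score can coexist with total failure on the rare class.

```python
# Hypothetical imbalanced test set: 95 "background" images, 5 "defect" images.
labels = ["background"] * 95 + ["defect"] * 5

# A degenerate model that always predicts the majority class.
predictions = ["background"] * 100


def overall_accuracy(y_true, y_pred):
    """Fraction of predictions that match the labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def recall_for(y_true, y_pred, cls):
    """Fraction of true `cls` samples that were predicted as `cls`."""
    relevant = [p for t, p in zip(y_true, y_pred) if t == cls]
    return sum(p == cls for p in relevant) / len(relevant)


accuracy = overall_accuracy(labels, predictions)
defect_recall = recall_for(labels, predictions, "defect")
print(f"accuracy={accuracy:.2f}, defect recall={defect_recall:.2f}")
```

The model never finds a single defect, yet its overall accuracy is 95 percent.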

Precision and Recall Explain How the Model Actually Behaves

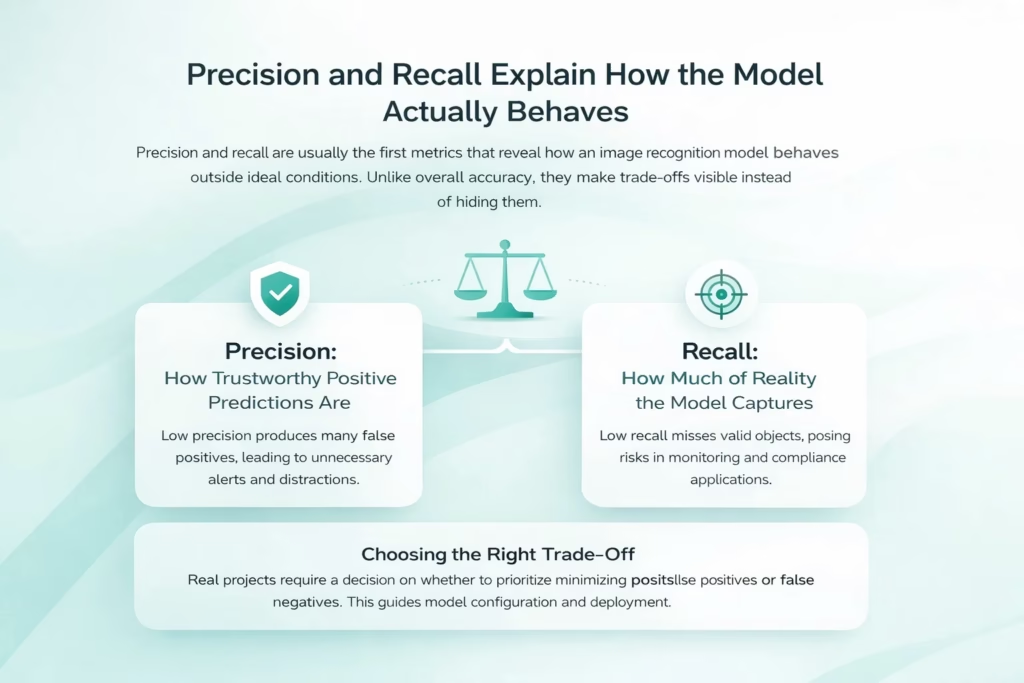

Precision and recall are usually the first metrics that reveal how an image recognition model behaves outside ideal conditions. Unlike overall accuracy, they make trade-offs visible instead of hiding them.

Precision: How Trustworthy Positive Predictions Are

Precision reflects how often the model is right when it makes a positive prediction. Low precision means the system produces many false positives. In real projects, this quickly becomes a problem when each detection triggers an alert, a workflow, or human review. Even a technically accurate model can become unusable if it constantly asks for unnecessary attention.

Recall: How Much of Reality the Model Captures

Recall measures coverage. It shows how much of what is actually present the model manages to detect. A model with low recall misses valid objects, even if the detections it does make are correct. In monitoring, safety, or compliance-related systems, missed detections often carry higher risk than false ones.
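Both metrics come from the same three counts: true positives, false positives, and false negatives. The sketch below uses made-up counts, and the function name is illustrative rather than taken from any particular library.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from raw detection counts.

    precision = TP / (TP + FP): how many positive predictions were right.
    recall    = TP / (TP + FN): how many real objects were found.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical counts: 40 correct detections, 10 false alarms, 60 missed objects.
precision, recall = precision_recall(tp=40, fp=10, fn=60)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Here most detections are trustworthy (precision 0.80), but the model still misses the majority of what is actually present (recall 0.40).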

Choosing the Right Trade-Off

Precision and recall describe different failure modes, and neither is universally better. Real projects require an explicit decision about which errors are more acceptable. That decision should guide threshold tuning, model selection, and how accuracy is ultimately judged.
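One way to make that decision explicit is to sweep the confidence threshold and watch both metrics move. The scores and labels below are invented for illustration; in a real project they would come from a held-out evaluation set.

```python
# Hypothetical detections: (confidence score, whether the object is really present).
scored = [
    (0.95, True), (0.90, True), (0.80, False), (0.70, True),
    (0.60, False), (0.40, True), (0.30, False), (0.20, False),
]


def precision_recall_at(threshold, scored_detections):
    """Treat every detection with score >= threshold as a positive prediction."""
    tp = sum(1 for s, present in scored_detections if s >= threshold and present)
    fp = sum(1 for s, present in scored_detections if s >= threshold and not present)
    fn = sum(1 for s, present in scored_detections if s < threshold and present)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


for threshold in (0.85, 0.50, 0.25):
    p, r = precision_recall_at(threshold, scored)
    print(f"threshold={threshold:.2f}  precision={p:.2f}  recall={r:.2f}")
```

On this toy data, lowering the threshold raises recall from 0.50 to 1.00 while precision falls from 1.00 to roughly 0.57. Which point on that curve is acceptable depends on the cost of each error type, not on the numbers themselves.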

Making Image Recognition Accuracy Practical at FlyPix AI

At FlyPix AI, we work with image recognition where accuracy has to survive real conditions, not just clean test data. Satellite, aerial, and drone imagery is complex by nature, so we focus on accuracy that holds up across environments, scale, and change.

We don’t treat accuracy as a single score. Our platform is built to help teams train custom models, validate detections visually, and iterate fast. By keeping domain knowledge close to the model and reducing the time it takes to test and retrain, we make accuracy something teams can actively work with, not just measure once.

Accuracy also doesn’t stop at deployment. As imagery changes over time, our workflows support continuous validation and retraining, so models stay aligned with real-world conditions instead of slowly drifting out of relevance.

Interpreting Core Accuracy Metrics Together

Once basic accuracy numbers are on the table, the real work begins. Image recognition systems rarely fail because a metric is missing. They fail because metrics are read in isolation. Precision, recall, F1 score, IoU, and mAP each describe a different aspect of model behavior, and none of them is sufficient on its own. The goal is to understand how they interact and what they reveal when viewed together.

Using the F1 Score Without Losing Detail

The F1 score combines precision and recall into a single number. It is useful for comparisons, especially when neither metric should dominate.

However, the F1 score should never replace direct inspection of precision and recall. Two models with the same F1 score can behave very differently in practice. One may miss rare cases. Another may flood the system with false detections.

Treat the F1 score as a summary, not a conclusion.
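Because F1 is the harmonic mean of precision and recall, swapping the two values leaves the score unchanged. The two models below are hypothetical and exist only to show that property.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical models with mirrored behavior.
cautious_model = {"precision": 0.90, "recall": 0.50}  # misses many objects
eager_model = {"precision": 0.50, "recall": 0.90}     # raises many false alarms

f1_cautious = f1_score(**cautious_model)
f1_eager = f1_score(**eager_model)
print(f"cautious F1={f1_cautious:.3f}, eager F1={f1_eager:.3f}")
```

Both models score about 0.643, yet one misses half of the objects and the other is wrong on half of its detections.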

Object Detection Accuracy Changes the Rules

Image recognition accuracy becomes more complex when object detection is involved. Detection systems must identify what is present and locate it correctly within the image.

Intersection over Union, or IoU, measures how well predicted bounding boxes overlap with ground truth. It turns accuracy into a spatial problem rather than a simple classification task.

Choosing IoU thresholds is not just a technical detail. Loose thresholds can hide localization problems. Extremely strict thresholds can penalize detections that are good enough for operational use. In real projects, IoU should reflect how precise detections need to be, not what looks best in reports.
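For axis-aligned boxes, IoU is the area of overlap divided by the area of the union. The sketch below assumes boxes given as `(x1, y1, x2, y2)` corner coordinates, which is only one of several common conventions, and the example boxes are made up.

```python
def iou(box_a, box_b):
    """Intersection over Union for two (x1, y1, x2, y2) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap rectangle (zero if the boxes do not overlap).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


ground_truth = (0, 0, 10, 10)
prediction = (5, 0, 15, 10)  # same size, shifted half a box to the right

score = iou(ground_truth, prediction)
for threshold in (0.3, 0.5):
    verdict = "match" if score >= threshold else "miss"
    print(f"IoU={score:.2f} at threshold {threshold}: {verdict}")
```

The same prediction counts as a match at a threshold of 0.3 and as a miss at 0.5, which is why the threshold has to follow operational requirements.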

Mean Average Precision and Its Limits

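Mean Average Precision, or mAP, is widely used because it folds detection confidence, ranking quality, and localization accuracy into one number. It is the mean over classes of average precision, the area under each class's precision-recall curve, computed at one IoU threshold or averaged across several. It provides a structured way to compare object detection models trained under similar conditions.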

mAP is most valuable as a comparative metric. It helps teams understand whether one approach improves detection quality relative to another. What it does not guarantee is robustness. A model can score well on mAP and still fail under specific lighting conditions, environments, or object arrangements.

For this reason, mAP should be treated as a lens, not a verdict.
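To show what the number is made of, here is a simplified sketch of average precision for one class at a single IoU threshold, followed by the mean over classes. It assumes each detection has already been matched against ground truth and flagged as a true or false positive, and it uses all-point interpolation. Benchmark tooling differs in interpolation and threshold choices, so values from this sketch are not directly comparable with published scores. All data is invented.

```python
def average_precision(detections, num_ground_truth):
    """AP for one class.

    detections: list of (confidence, is_true_positive), already matched at
    some fixed IoU threshold. num_ground_truth: number of real objects.
    """
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    precisions, recalls = [], []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)

    # Precision envelope: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Area under the enveloped precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


# Hypothetical per-class results: (detections, number of ground-truth objects).
per_class = {
    "vehicle": ([(0.9, True), (0.8, False), (0.7, True), (0.6, False)], 3),
    "building": ([(0.9, True), (0.5, True)], 2),
}
ap_by_class = {c: average_precision(d, n) for c, (d, n) in per_class.items()}
mean_ap = sum(ap_by_class.values()) / len(ap_by_class)
print(ap_by_class, f"mAP={mean_ap:.3f}")
```

Note how the averaged value sits between a perfect class and a much weaker one, which is exactly the kind of detail a single mAP figure smooths over.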

Always Look at Performance Per Class

One of the most common reasons image recognition systems fail is uneven class performance. Aggregated metrics hide this problem.

When evaluating accuracy, always inspect metrics per class. This reveals whether certain objects are consistently harder to detect or more likely to be confused with others.

This step often changes priorities. A model that looks strong overall may be unacceptable if it fails on the most important classes.
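A per-class breakdown needs nothing more than the true and predicted labels. The sketch below is a minimal stdlib version with hypothetical class names and data; libraries such as scikit-learn provide equivalent reports.

```python
from collections import Counter


def per_class_metrics(y_true, y_pred):
    """Precision, recall, and support for every class seen in the data."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gets a false positive
            fn[truth] += 1  # true class gets a false negative

    metrics = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        predicted = tp[cls] + fp[cls]
        actual = tp[cls] + fn[cls]
        metrics[cls] = {
            "precision": tp[cls] / predicted if predicted else 0.0,
            "recall": tp[cls] / actual if actual else 0.0,
            "support": actual,
        }
    return metrics


# Hypothetical results: 9 of 10 predictions are correct overall.
y_true = ["car"] * 8 + ["truck"] * 2
y_pred = ["car"] * 9 + ["truck"]

report = per_class_metrics(y_true, y_pred)
for cls, m in report.items():
    print(f"{cls:>6}: precision={m['precision']:.2f} "
          f"recall={m['recall']:.2f} support={m['support']}")
```

Overall accuracy here is 90 percent, but recall on the rarer `truck` class is only 0.50.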

Confusion Matrices Turn Errors Into Patterns

Confusion matrices are one of the most practical tools for understanding how an image recognition model behaves. Instead of collapsing errors into a single score, they show how predictions move between classes, revealing structure in the mistakes.

What Confusion Matrices Reveal

By laying predictions against ground truth, confusion matrices help answer questions that scalar metrics cannot:

- Which classes are most frequently confused with each other

- Whether errors tend to be one-directional or mutual

- Whether mistakes cluster around visually similar or overlapping categories

Why This View Matters

These patterns often point directly to underlying issues, such as ambiguous class definitions, inconsistent labeling, or missing training examples. Because confusion matrices expose relationships between classes, they are especially useful when deciding whether to collect more data, refine labels, or adjust class boundaries.
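A confusion matrix is just a count of (true class, predicted class) pairs. The following sketch builds one from hypothetical labels, then pulls out the most frequent confusion and checks whether it also happens in the opposite direction.

```python
from collections import Counter

# Hypothetical labels from a land-cover classifier.
y_true = ["road", "road", "road", "path", "path", "river"]
y_pred = ["road", "path", "path", "path", "path", "river"]

# Count every (truth, prediction) pair; missing pairs default to 0.
confusion = Counter(zip(y_true, y_pred))
classes = sorted(set(y_true) | set(y_pred))

# Rows are true classes, columns are predicted classes.
print("columns:", classes)
for truth in classes:
    print(f"{truth:>6}", [confusion[(truth, pred)] for pred in classes])

# Off-diagonal cells are the errors, listed most frequent first.
errors = [(pair, n) for pair, n in confusion.most_common() if pair[0] != pair[1]]
top_pair, top_count = errors[0]
reverse_count = confusion[(top_pair[1], top_pair[0])]
print(f"{top_pair[0]} -> {top_pair[1]}: {top_count}, reverse: {reverse_count}")
```

In this toy data, roads are mistaken for paths but never the other way around. A one-directional pattern like that often suggests a labeling or class-definition issue rather than random noise.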

Validation Only Works With Truly Unseen Data

Accuracy evaluation breaks down when validation data is too similar to training data. This happens more often than teams expect.

If augmented versions of the same images appear in multiple splits, or if data comes from the same narrow conditions, accuracy looks artificially high. The model is being tested on variations of what it has already seen.

A meaningful test set should differ in ways that matter. That can include different locations, devices, time periods, or capture conditions. Without this separation, accuracy evaluation becomes self-confirming rather than predictive.
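One common way to enforce this separation is to split by group rather than by image, so that every image from the same source (and every augmented copy of it) lands in the same split. The sketch below assumes each sample carries a `group` field such as a capture site or original image ID. The field name, file names, and hashing scheme are illustrative assumptions, not a prescribed method.

```python
import hashlib


def assign_split(group_id, test_fraction=0.2):
    """Deterministically map a group to 'train' or 'test' using a hash."""
    digest = hashlib.sha256(group_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # value in [0, 1]
    return "test" if bucket < test_fraction else "train"


# Hypothetical samples: originals and augmented copies share a group.
samples = [
    {"file": "site_a_001.png", "group": "site_a"},
    {"file": "site_a_001_flipped.png", "group": "site_a"},
    {"file": "site_b_001.png", "group": "site_b"},
    {"file": "site_b_001_rotated.png", "group": "site_b"},
    {"file": "site_c_001.png", "group": "site_c"},
    {"file": "site_d_001.png", "group": "site_d"},
]

splits = {"train": [], "test": []}
for sample in samples:
    splits[assign_split(sample["group"])].append(sample)

train_groups = {s["group"] for s in splits["train"]}
test_groups = {s["group"] for s in splits["test"]}

# No group may appear on both sides of the split.
assert train_groups.isdisjoint(test_groups)
print("train groups:", sorted(train_groups))
print("test groups:", sorted(test_groups))
```

With only a handful of groups, the hash may place very few of them (or none) in the test split, so the realized split size should be checked on real data. The point of the sketch is the invariant: augmented variants never straddle the split.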

Testing Under Real Conditions Changes Conclusions

Many accuracy issues only appear once models encounter real-world imperfections. Motion blur, noise, occlusion, compression artifacts, and poor lighting expose weaknesses that clean datasets never reveal.

Testing under realistic conditions often leads to uncomfortable but valuable discoveries. A model that performs well in ideal scenarios may struggle once conditions vary even slightly. Finding this before deployment saves time, cost, and credibility.

This stage does not require perfect simulation. It requires honest sampling of how images actually look in production.
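Where production samples are not yet available, a rough stress test can apply simple degradations to clean images and compare results. The sketch below is a toy under stated assumptions: images are plain nested lists of grayscale values, `degrade` only darkens and adds Gaussian noise, and `toy_classifier` is a hypothetical stand-in for a real model. It complements sampled production images and does not replace them.

```python
import random


def degrade(image, brightness=0.6, noise_std=10.0, seed=0):
    """Darken a grayscale image and add Gaussian noise, clamped to 0..255."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    return [
        [min(255, max(0, round(px * brightness + rng.gauss(0.0, noise_std))))
         for px in row]
        for row in image
    ]


def toy_classifier(image):
    """Hypothetical stand-in model: labels an image by mean brightness."""
    pixels = [px for row in image for px in row]
    return "day" if sum(pixels) / len(pixels) > 128 else "night"


# A clean, evenly lit 4x4 daytime image (hypothetical).
clean = [[150] * 4 for _ in range(4)]
degraded = degrade(clean)

print("clean:   ", toy_classifier(clean))
print("degraded:", toy_classifier(degraded))
```

The toy model labels the clean image correctly and flips its answer once the same scene is darker and noisier, which is the kind of fragility this stage is meant to surface.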

Accuracy Over Time and the Role of Bias

Image recognition accuracy is not static. Real-world data evolves constantly, and models that are not monitored gradually drift out of alignment with reality. Seasonal changes, new hardware, environmental shifts, and changes in user behavior all affect how images look and how models interpret them. When accuracy is only checked at launch, this slow degradation often goes unnoticed until failures become obvious.

Post-deployment accuracy checks should focus on trends rather than isolated numbers. Gradual performance decline is often more dangerous than sudden failure because it hides behind familiar metrics. Continuous monitoring makes it possible to detect subtle shifts early and respond before accuracy drops below acceptable levels.
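A trend check can be as simple as comparing a recent window of a metric against a baseline window. The weekly recall values, window size, and tolerance below are hypothetical and would need tuning for a real system.

```python
from statistics import mean


def drift_alert(history, window=4, max_drop=0.03):
    """Flag drift when the recent average falls too far below the baseline.

    history: metric values in chronological order (e.g. weekly recall).
    """
    if len(history) < 2 * window:
        return False  # not enough data to compare two windows
    baseline = mean(history[:window])
    recent = mean(history[-window:])
    return (baseline - recent) > max_drop


# Hypothetical weekly recall: no single week drops by more than about 0.01.
weekly_recall = [0.91, 0.90, 0.91, 0.90, 0.89, 0.89, 0.88, 0.87, 0.86, 0.85]

largest_weekly_drop = max(a - b for a, b in zip(weekly_recall, weekly_recall[1:]))
print(f"largest week-to-week drop: {largest_weekly_drop:.3f}")
print("drift alert:", drift_alert(weekly_recall))
```

No individual week looks alarming, yet the window comparison shows recall has slipped by about four points since launch.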

Bias plays a direct role in this process. Models trained on narrow or unbalanced data tend to perform well only under the conditions they have already seen. When new environments, object types, or visual patterns appear, metrics measured on the original data overstate reliability. Reducing bias improves coverage and, with it, robustness. Fairer models are usually more stable over time and easier to maintain as conditions change.

Using Accuracy to Make Real Decisions

Accuracy metrics exist to guide decisions, not to impress stakeholders. Reporting should explain trade-offs, limitations, and known risks instead of hiding them behind a single number. When accuracy is presented without context, it creates false confidence and leads teams to overlook problems that surface later in production.

In practice, useful accuracy reporting should make the following clear:

- Which types of errors matter most and why they are acceptable or not

- Where the model performs unevenly, including classes or scenarios with lower reliability

- What conditions the evaluation reflects, such as data sources, environments, or time periods

- How performance is expected to change over time, and how it will be monitored

Clear, honest reporting builds trust across teams and leads to systems that are easier to maintain, improve, and rely on in real-world use.

When a Model Is Actually Ready

A model is ready when its behavior is understood, not when its metrics reach their highest point. High scores can hide fragile performance, especially if they come from narrow datasets or ideal conditions. What matters more is knowing how the model fails, where those failures occur, and whether they align with acceptable risk. Predictable errors can be managed through thresholds, workflows, or retraining. Unknown errors surface later, usually when the cost of fixing them is higher.

Real readiness comes from disciplined evaluation rather than optimistic interpretation. That means testing under realistic conditions, validating against truly unseen data, and monitoring performance after deployment. A model that is continuously observed and adjusted is far more reliable than one that simply looked strong at launch.

Final Thoughts

Checking image recognition accuracy in real projects is not about finding the highest score. It is about understanding how a system behaves when reality intervenes.

Metrics are tools. Used carefully, they reveal strengths and weaknesses. Used carelessly, they create confidence without reliability.

The difference between a demo and a dependable image recognition system is not architecture. It is how honestly accuracy is measured, tested, and maintained over time.

Frequently Asked Questions

Which metric is best for checking image recognition accuracy?

There is no single best metric. Overall accuracy can be useful as a quick signal, but it is rarely enough on its own. In real projects, accuracy should be evaluated using a combination of precision, recall, and task-specific metrics like IoU or mAP for object detection. The right mix depends on what kinds of errors matter most in your use case.

Why does a model that scored well in testing fail in production?

This usually happens when evaluation data is too similar to training data or does not reflect real conditions. Clean images, limited environments, or data leakage between splits can inflate accuracy scores. Once the model encounters new lighting, angles, noise, or environments, weaknesses appear that were never tested for.

Which matters more, precision or recall?

It depends on the cost of errors. If false positives trigger manual review, alerts, or automated actions, precision matters more. If missed objects create risk or blind spots, recall is more important. Most real systems require a conscious trade-off rather than optimizing one metric blindly.

Is the F1 score enough to evaluate a model on its own?

No. The F1 score is useful for comparison, but it hides how precision and recall are balanced. Two models with the same F1 score can behave very differently in practice. Always look at precision and recall separately before making decisions.

How often should accuracy be checked after deployment?

Accuracy should be checked regularly after deployment, not just once. The right frequency depends on how fast data changes, but any system exposed to new environments, seasons, or hardware should be monitored continuously. Slow performance drift is common and often goes unnoticed without tracking trends.